What does Facebook's latest scandal reveal about online privacy?

In a recent high-profile controversy, Cambridge Analytica, a political consulting firm, improperly obtained data from up to 87 million Facebook user profiles. Regulators in the UK, the US, the EU and Australia have opened investigations into Facebook’s privacy practices. Some prominent figures, including WhatsApp co-founder Brian Acton, have urged people to delete their Facebook accounts. Is the Cambridge Analytica scandal Facebook’s digital equivalent of BP’s Macondo disaster?

People generally understand that data is powerful, but few realise just how powerful. A 2000 study by Carnegie Mellon University found that three basic attributes from the 1990 US census (date of birth, gender and zip code) were enough to uniquely identify 87% of the US population.
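To illustrate why so few attributes can be so revealing, the sketch below counts how many records in a dataset have a combination of date of birth, gender and zip code that no other record shares. Anyone whose combination is unique can be re-identified by linking it to another dataset containing names. The toy records and the `fraction_unique` helper are hypothetical and were made up for illustration. This is a minimal sketch of the idea, not the method used in the Carnegie Mellon study.

```python
from collections import Counter

# Hypothetical toy records: (date_of_birth, gender, zip_code).
# These values are invented for illustration and are not real census data.
records = [
    ("1965-07-31", "F", "02138"),
    ("1965-07-31", "M", "02138"),
    ("1980-01-15", "F", "15213"),
    ("1980-01-15", "F", "15213"),
    ("1992-11-02", "M", "94105"),
]

def fraction_unique(rows):
    """Return the share of rows whose quasi-identifier combination appears exactly once."""
    counts = Counter(rows)  # how many rows share each (dob, gender, zip) combination
    unique = sum(1 for row in rows if counts[row] == 1)
    return unique / len(rows)

print(f"{fraction_unique(records):.0%} of records are uniquely identifiable")
```

In this toy example, three of the five records are unique. The two records that share every attribute cannot be told apart. Coarser attributes, such as birth year instead of full date of birth, make fewer people unique. That trade-off underlies common anonymisation techniques.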

Even encryption cannot protect your secrets forever. Quantum computers are still years away, but they could in theory break the public-key cryptosystems in common use today. Hackers are reportedly already stockpiling encrypted data in the hope of decrypting it once that becomes possible.

Given how powerful user data is, and how widespread privacy concerns are, why not legislate that all user data is private? Put simply, doing so could upend the business models of a swathe of technology companies that have driven considerable innovation in the sector.

Should consumers worry about online privacy?

Most social media companies use an advertising-funded business model: users receive free services and, in return, surrender their data for targeted advertising. Much of what users share on social media, such as travel pictures, life updates and views on current issues, is content they expect others to see. Facebook’s data leak to Cambridge Analytica therefore differs from the Equifax breach, in which hackers stole sensitive credit data. Hackers have little interest in Facebook’s data, but advertisers (and political parties) do.

The advertising industry relies on human psychology: what you see, how you feel, what you believe. Such information cannot be collected directly, only inferred, and this is where social media comes in. Your likes on Facebook, your comments on Twitter, the photos you share and your internet searches all help advertisers to know you better. To track users online, websites deploy silent data collectors, or ‘trackers’ (e.g. third-party cookies). According to the analytics firm Evidon, more than 4,000 companies operate in the consumer-tracking ecosystem, and Google and Facebook own the most popular trackers.

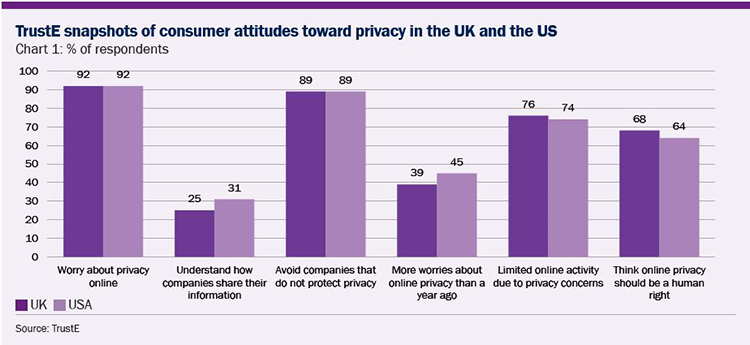

According to a March 2017 report by Citi, consumers in the UK and the US are worried about online privacy. A clear majority believe privacy should be a human right, yet fewer than a third think they understand how their data is shared.

Consumers in other countries broadly share this concern. It has two main sources: 1) users do not know how their data is used or processed, and 2) users do not understand how companies share their data. Against this backdrop, it is easy to see why the Facebook data leak has attracted a firestorm of criticism.

Which questions should we be asking?

The critical question for shareholders is whether Facebook’s latest revelations mark a watershed moment. If they push governments into introducing tougher privacy protections, business models built on the assumption of ‘free data’ could be at risk.

Facebook debated internally whether the Cambridge Analytica incident was actually a data breach, but we believe this misses the point. We are concerned that Facebook has collected too much data from the outset. We are also concerned that programmatic advertising has fuelled filter bubbles, fake news and Russian meddling. (In a filter bubble, algorithms show users content they are likely to agree with and filter out content they are not.) We also question the company’s ability to protect users from unnecessary data sharing.

Facebook collects vast amounts of user data. It tracks not only its own users online but also people who do not use Facebook. To follow users’ online habits, it uses cookies, social plugins (such as the ‘Like’ button) and pixels, all of which are industry-standard technologies. Through its ‘Partner Categories’ product, Facebook also draws on data from third parties such as Acxiom, Epsilon and Experian Marketing Services to learn about users’ offline behaviour. Facebook builds user profiles from this data, and advertisers use those profiles for targeted advertising. The approach is very effective. Russian-linked accounts spent only about $100,000 on Facebook political advertising, yet their content, paid and unpaid, reached up to 150 million Americans around the 2016 presidential election.

Facebook’s data collection was under scrutiny even before the latest scandal. In February 2018, a Belgian court ruled that the company had broken privacy laws by tracking people on third-party websites without their consent. Facebook is appealing the ruling, but it could face a fine of €250,000 a day, up to a maximum of €100 million. The company also faces a similar lawsuit in Austria. Germany’s antitrust agency has carried out a preliminary investigation into whether the company violated German data protection law. It is now considering banning Facebook from collecting and processing user data obtained from third parties.

Nor is Facebook’s advertising algorithm free of concern. The company allows advertisers to target users based on sensitive data such as ethnic affinity and political views. It also runs a dedicated site, politics.fb.com, that offers political targeting. The company is likely to come under pressure to stop targeting consumers on the basis of such data.

What should shareholders expect next?

CEO Mark Zuckerberg has welcomed regulation on advertising transparency (i.e. who paid for an advertisement and on what criteria users are targeted). Even so, Facebook still needs to address a number of areas. These include greater transparency for users about the data it collects, and giving users the ability to opt in to or out of tracking in certain areas. If users do not feel their data is safe on the platform, they will share less and engage less.

Google, another technology giant with a markedly similar business model, has so far stayed out of the limelight in the Cambridge Analytica scandal. Because Google focuses on search, its users arrive with specific intentions and are, in theory, less susceptible to influence on its platform. Like Facebook, however, Google collects information about users and serves targeted advertising. Its platforms have also been affected by fake news and Russian election interference. As public scrutiny intensifies, more examples of improper data use across the industry are likely to emerge.

New privacy regulation almost certainly lies ahead and will affect both Google and Facebook. Europe’s General Data Protection Regulation is a good guide to what a future US privacy law might look like. A law along those lines would give Facebook and Google users more transparency and more control over what data is collected and shared. If users decide to withhold data, the two companies’ ability to gather it could suffer. Their advertising would then become less effective, which could slow growth.

We believe that Facebook and Google should take more responsibility for the content on their platforms. Platform businesses are currently not liable for the content they host. That position is increasingly hard to defend, given the potentially damaging impact of such content on society.

Finally, tech companies must rethink their governance. Many, including Facebook and Google, have dual-class share structures that shield founders and management from outside influence. Many of the societal and privacy issues raised by the Cambridge Analytica scandal are not new. Has the board ever discussed them? Can the board hold the founder accountable? Shareholders expect checks and balances in the boardroom, and a dual-class share structure does not give outside investors that assurance.